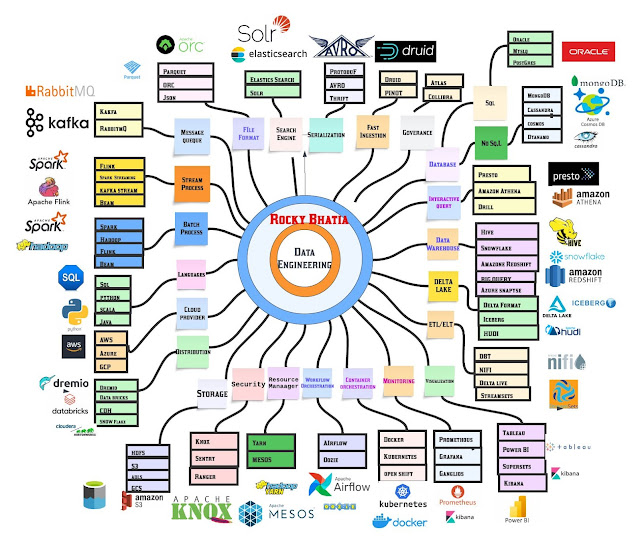

Data engineering ecosystem

𝗗𝗮𝘁𝗮 𝗘𝗻𝗴𝗶𝗻𝗲𝗲𝗿𝗶𝗻𝗴 involves a rich understanding of the large distributed systems on which data solutions rely.

It makes it possible to take vast amounts of data and translate them into insights, with a focus on the production readiness of data: formats, resilience, scaling, and security.

𝗣𝗿𝗼𝗰𝗲𝘀𝘀𝗶𝗻𝗴

𝗕𝗮𝘁𝗰𝗵 - processing a large volume of data all at once.

Hadoop

Spark

𝗦𝘁𝗿𝗲𝗮𝗺 - processing a continuous stream of data immediately as it is generated.

Flink

Spark Streaming
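To make the batch vs. stream distinction concrete, here is a minimal plain-Python sketch (not Hadoop, Spark, or Flink code). The function names `batch_total` and `stream_totals` are made up for illustration: the batch version sees the whole dataset at once, while the stream version emits an updated result as each event arrives.

```python
from typing import Iterable, Iterator


def batch_total(events: list[int]) -> int:
    """Batch: the full dataset is available, so process it in one pass."""
    return sum(events)


def stream_totals(events: Iterable[int]) -> Iterator[int]:
    """Stream: emit an updated running total as each event arrives."""
    running = 0
    for value in events:
        running += value
        yield running


events = [5, 3, 7]
batch_result = batch_total(events)
stream_results = list(stream_totals(iter(events)))

print(batch_result)    # 15
print(stream_results)  # [5, 8, 15]
```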

𝗗𝗮𝘁𝗮𝗯𝗮𝘀𝗲𝘀: SQL and NoSQL databases differ in whether their schemas are predefined or dynamic, how they scale, the type of data they hold, and whether they are a better fit for multi-row transactions or unstructured data.

𝗦𝗤𝗟

Oracle

MySQL

𝗡𝗼𝗦𝗤𝗟

MongoDB

HBase
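A rough sketch of the schema and transaction difference, using Python's built-in `sqlite3` as a stand-in for a SQL database and plain dicts as a stand-in for a document store. The `accounts` table and the sensor documents are invented for illustration; this is not Oracle, MySQL, MongoDB, or HBase client code.

```python
import sqlite3

# SQL side: the schema is declared up front, and a multi-row update
# either commits in full or rolls back in full.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER NOT NULL)"
)
conn.executemany("INSERT INTO accounts VALUES (?, ?)", [(1, 100), (2, 50)])
conn.commit()

with conn:  # commits if the block succeeds, rolls back if it raises
    conn.execute("UPDATE accounts SET balance = balance - 30 WHERE id = 1")
    conn.execute("UPDATE accounts SET balance = balance + 30 WHERE id = 2")

balances = conn.execute(
    "SELECT id, balance FROM accounts ORDER BY id"
).fetchall()

# Document side: each record carries its own fields, so shapes can differ.
documents = [
    {"_id": 1, "name": "sensor-a", "readings": [1.5, 2.0]},
    {"_id": 2, "name": "sensor-b", "location": {"lat": 12.9, "lon": 77.6}},
]
field_sets = [sorted(doc.keys()) for doc in documents]

print(balances)    # [(1, 70), (2, 80)]
print(field_sets)  # [['_id', 'name', 'readings'], ['_id', 'location', 'name']]
```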

𝗗𝗮𝘁𝗮 𝗪𝗮𝗿𝗲𝗵𝗼𝘂𝘀𝗲: it centralizes and consolidates large amounts of data from multiple sources.

Hive

Redshift

𝗟𝗮𝗻𝗴𝘂𝗮𝗴𝗲𝘀:

SQL

Java

Python

Scala

𝗠𝗲𝘀𝘀𝗮𝗴𝗲 𝗤𝘂𝗲𝘂𝗲 - publish-subscribe (pub-sub) messaging that decouples producers from consumers.

Kafka

RabbitMQ
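The pub-sub idea can be sketched with a toy in-memory broker. The `Broker` class and the topic names below are hypothetical; real brokers such as Kafka or RabbitMQ add persistence, ordering, and delivery guarantees that this sketch leaves out.

```python
from collections import defaultdict
from typing import Callable


class Broker:
    """Toy in-memory broker: routes each message to every subscriber of its topic."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[str], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[str], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: str) -> int:
        """Deliver the message and return how many subscribers received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


broker = Broker()
billing_inbox: list[str] = []
audit_inbox: list[str] = []
broker.subscribe("orders", billing_inbox.append)
broker.subscribe("orders", audit_inbox.append)

# The publisher only knows the topic name, not who is listening.
delivered = broker.publish("orders", "order-1 created")
undelivered = broker.publish("refunds", "refund-9 issued")

print(delivered, undelivered)  # 2 0
```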

𝗙𝗶𝗹𝗲 𝗙𝗼𝗿𝗺𝗮𝘁: designed for efficient data storage and retrieval

Parquet

ORC
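Parquet and ORC are columnar formats. The sketch below shows the layout idea only, in plain Python with made-up sample records; it does not read or write either format and ignores the encoding and compression they apply.

```python
rows = [
    {"user": "a", "country": "IN", "amount": 10},
    {"user": "b", "country": "US", "amount": 25},
    {"user": "c", "country": "IN", "amount": 5},
]

# Row layout: summing one field still walks every full record.
row_total = sum(row["amount"] for row in rows)

# Columnar layout: values of each field are stored together,
# so a query can read only the columns it needs.
columns = {key: [row[key] for row in rows] for key in rows[0]}
column_total = sum(columns["amount"])

print(columns["country"])        # ['IN', 'US', 'IN']
print(row_total, column_total)   # 40 40
```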

𝗦𝗲𝗮𝗿𝗰𝗵 𝗲𝗻𝗴𝗶𝗻𝗲 - mostly based on the Lucene library. These provide distributed, multitenant-capable full-text search with an HTTP web interface.

Elasticsearch

Solr
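Full-text search in Lucene-based engines rests on an inverted index: a map from each term to the documents that contain it. A minimal sketch of that idea, with invented sample documents and a hypothetical `search` helper (no scoring, stemming, or distribution):

```python
from collections import defaultdict

docs = {
    1: "spark batch processing",
    2: "flink stream processing",
    3: "kafka message queue",
}

# Build the inverted index: term -> set of document ids containing it.
index: dict[str, set[int]] = defaultdict(set)
for doc_id, text in docs.items():
    for term in text.lower().split():
        index[term].add(doc_id)


def search(query: str) -> list[int]:
    """Return ids of documents containing every query term (AND semantics)."""
    terms = query.lower().split()
    if not terms:
        return []
    matches = set.intersection(*(index.get(t, set()) for t in terms))
    return sorted(matches)


print(search("processing"))         # [1, 2]
print(search("stream processing"))  # [2]
```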

𝗜𝗻𝘁𝗲𝗿𝗮𝗰𝘁𝗶𝘃𝗲 𝗾𝘂𝗲𝗿𝘆: Fast and Reliable SQL Engine for Data Analytics

Presto

Athena

𝗟𝗮𝗸𝗲𝗵𝗼𝘂𝘀𝗲 𝗧𝗮𝗯𝗹𝗲 𝗙𝗼𝗿𝗺𝗮𝘁:

Delta Lake

Iceberg

𝗖𝗹𝗼𝘂𝗱 𝗣𝗿𝗼𝘃𝗶𝗱𝗲𝗿 :

AWS

Azure

GCP

𝗦𝘁𝗼𝗿𝗮𝗴𝗲 : where massive amounts of data are stored

HDFS

S3

GFS

ADLS

𝗪𝗼𝗿𝗸𝗳𝗹𝗼𝘄 𝗺𝗮𝗻𝗮𝗴𝗲𝗺𝗲𝗻𝘁: tools that make it easy to write, schedule, and monitor workflows.

Airflow

Oozie
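Workflow tools typically model a pipeline as a graph of tasks with dependencies and run each task only after its upstream tasks finish. A small sketch of that ordering step using Python's standard `graphlib`; the task names and the `run` helper are hypothetical, and this is not Airflow or Oozie syntax.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}


def run(task: str, log: list[str]) -> None:
    """Stand-in for real work: just record that the task ran."""
    log.append(task)


run_log: list[str] = []
for task in TopologicalSorter(dependencies).static_order():
    run(task, run_log)

print(run_log)  # ['extract', 'transform', 'load', 'report']
```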

𝗥𝗲𝘀𝗼𝘂𝗿𝗰𝗲 𝗠𝗮𝗻𝗮𝗴𝗲𝗿 - distributed resource management that allocates cluster resources among all the applications in the system

YARN

Mesos

𝗙𝗮𝘀𝘁 𝗜𝗻𝗴𝗲𝘀𝘁𝗶𝗼𝗻: ingest massive quantities of event data and provide low-latency queries

Druid

Pinot

𝗠𝗼𝗻𝗶𝘁𝗼𝗿𝗶𝗻𝗴: Cluster and system monitoring

Grafana

Kibana

𝗖𝗼𝗻𝘁𝗮𝗶𝗻𝗲𝗿𝘀 𝗮𝗻𝗱 𝗢𝗿𝗰𝗵𝗲𝘀𝘁𝗿𝗮𝘁𝗶𝗼𝗻

Docker

Kubernetes

𝗩𝗶𝘀𝘂𝗮𝗹𝗶𝘇𝗮𝘁𝗶𝗼𝗻 :

Superset

Kibana

(REF: LinkedIn-rocky-bhatia)